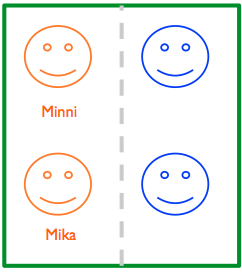

School is over, and so the extensive studies (5 classes, circa 75 students in total) conducted in Hämeenlinna have come to an end. The idea was that the classes used a hacked version of a backchannel tool in which we split them into two groups, condition A and condition not A. This way we could compare the conditions in the same context and make an intelligent guess about the impact of feature A on their discussion.

Much of this work was inspired by the "in the wild" movement in the human-computer interaction field (e.g., Brown et al., 2011), which argues that instead of a laboratory study, research should be conducted in reality: real places, real people and real stuff. The research methodology, on the other hand, was based on quasi-experimentation (see Oulasvirta, 2012; Stroker, 2010). Compared to a traditional laboratory-based study, the control in quasi-experimentation is more relaxed: for example, you just decide that half of the people need to be part of group A and half of them part of group not A, but you don’t try to control who ends up where.

The end result is that there were some problems, and the condition structure failed. In total we had around 2,000 messages, of which 1,800 belong to group A and only 200 to group not A. The number of people posting also differs between the groups, even though I double-checked that the groups were indeed split half and half.
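To put a number on how lopsided that is, here is a minimal Python sketch that computes each group's share of the messages and a chi-square statistic against an even split. The counts are the rounded figures from this post, and the function names are made up for illustration. Since one student can post many messages, the messages are not independent observations, so treat the statistic as a rough description rather than a proper significance test.

```python
# Approximate message counts per condition, taken from the rounded
# figures in the post (not exact data).
messages = {"A": 1800, "not A": 200}


def share(counts):
    """Return each group's fraction of the total message count."""
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def chi_square_even_split(counts):
    """Chi-square statistic against the assumption that every group
    produces the same number of messages."""
    total = sum(counts.values())
    expected = total / len(counts)
    return sum((n - expected) ** 2 / expected for n in counts.values())


shares = share(messages)
chi2 = chi_square_even_split(messages)

print(shares)
print(chi2)
```

With these counts, group A accounts for about 90% of all messages, far from the even split the design aimed for.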

Lessons learned and next steps

Running experiments can be hard. In the future, I would try to avoid studies as extensive as this one and introduce a bit more control or oversight, even though that threatens the validity (fitness to real life) of the study.

I’m hoping to make a third try using this setup with someone at some point. Also, we’ll try to salvage something interesting from the data even though the condition system failed.

More details on my personal blog.